The Second Coming or Slouching Towards Bethlehem? Algorithmic Biases and Responsible Uses of Generative AI

Things fall apart; The center cannot hold (with apologies to W.B. Yeats)

Since #generativeai is all over the news, I decided to spend some time to check out #dalle and #chatgpt. There are incredible potential and opportunities ahead, especially in the areas of product management, customer service, creative industries and customer facing services in general. I do worry about the bias and fairness issues in these services as well.

How Good is Generative AI?

Some have suggested that, while generative AI may not replace humans altogether, it can greatly speed up human cognition and aid tasks such as product development, ML Ops, model training etc. Building on this Fast Company interview with Thomas Davenport and Nitin Mittal, generative AI is “artificial intelligence that can generate novel content, rather than simply analyzing or acting on existing data. Generative AI models produce text and images: blog posts, program code, poetry, and artwork. The software uses complex machine learning models to predict the next word based on previous word sequences, or the next image based on words describing previous images. In the shorter term, we see generative AI used to create marketing content, generate code, and in conversational applications such as chatbots.”

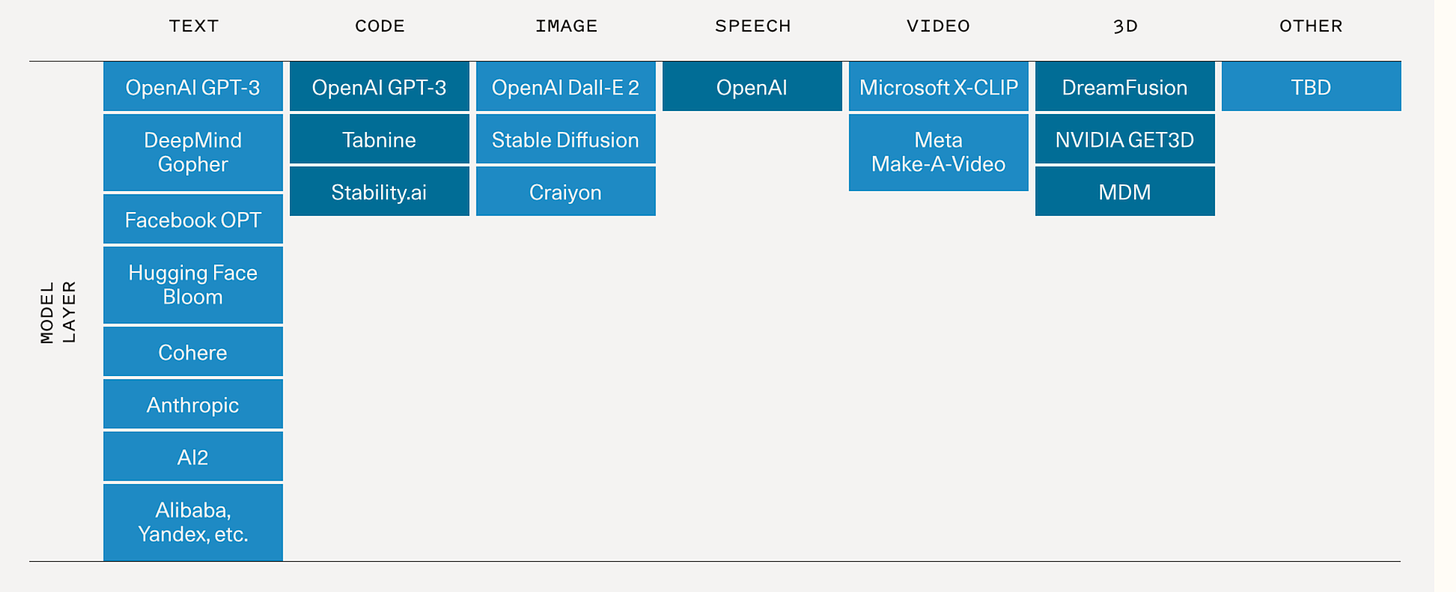

This is a description of the generative AI landscape from Sequoia Capital

Sequoia lists several applications, including:

Copywriting: The growing need for personalized web and email content to fuel sales and marketing strategies as well as customer support are perfect applications for language models. The short form and stylized nature of the verbiage combined with the time and cost pressures on these teams should drive demand for automated and augmented solutions.

Vertical specific writing assistants: Most writing assistants today are horizontal; we believe there is an opportunity to build much better generative applications for specific end markets, from legal contract writing to screenwriting. Product differentiation here is in the fine-tuning of the models and UX patterns for particular workflows.

Code generation: Current applications turbocharge developers and make them much more productive: GitHub Copilot is now generating nearly 40% of code in the projects where it is installed. But the even bigger opportunity may be opening up access to coding for consumers. Learning to prompt may become the ultimate high-level programming language.

Art generation: The entire world of art history and pop cultures is now encoded in these large models, allowing anyone to explore themes and styles at will that previously would have taken a lifetime to master.

Gaming: The dream is using natural language to create complex scenes or models that are riggable; that end state is probably a long way off, but there are more immediate options that are more actionable in the near term such as generating textures and skybox art.

Media/Advertising: Imagine the potential to automate agency work and optimize ad copy and creative on the fly for consumers. Great opportunities here for multi-modal generation that pairs sell messages with complementary visuals.

AI and Creativity

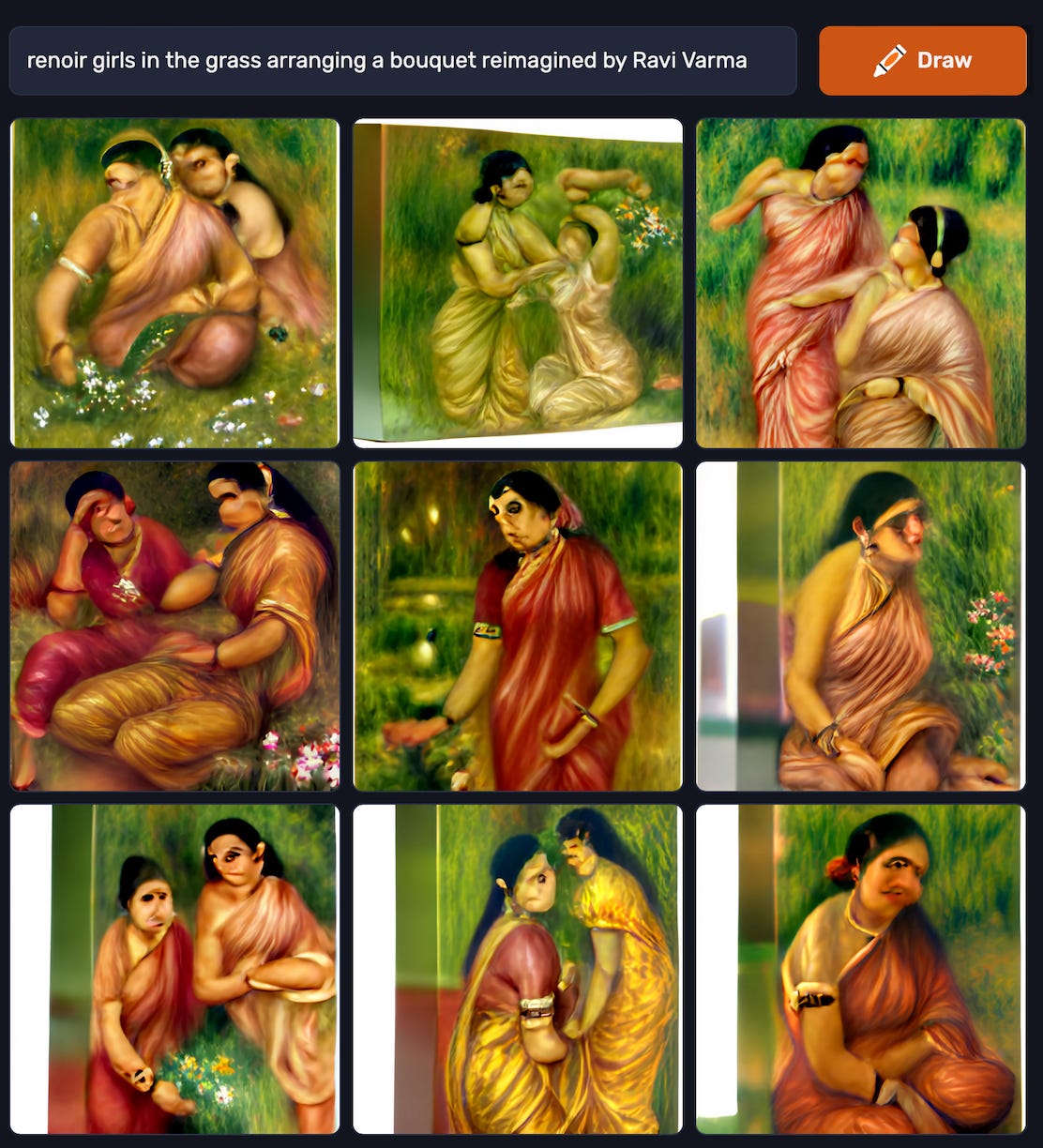

This is what I got with the prompt “Renoir’s Girls in the Grass reimagined by Ravi Varma” using Dall-E mini. What is not surprising is the outputs of generative AI depend greatly on the training data.

Not surprisingly, generative AI has sparked a lot of interest and creative ideas about its use.

Not surprisingly, there are questions of copyright and ownership. These models have been trained on gigantic repositories of data mined from online settings. DALL-E 2 was supposed to have been trained on a corpus of approximately 650 million image-text pairs scraped from the Internet, according to the paper that OpenAI posted. This does represent millions of hours of labor and creativity by illustrators and creative professionals. It is interesting in this regard to mention that Microsoft, GitHub and OpenAI are the subject of a class action lawsuit for allegedly violating copyright law by reproducing open-source code using AI. It will be interesting to watch these developments in what they portend for generative AI.

Another question is how we define AI creativity? This will be the subject of a future post. For now, I will confine my attention to issues of bias and fairness issues in generative AI.

The Values Encoded in Generative AI

This was what was generated by #chatgpt with the prompt: "how is responsible artificial intelligence different from algorithmic bias"

Algorithmic bias refers to the tendency of AI systems to produce biased or unfair outcomes due to the data and algorithms they are trained on. This can occur when the data used to train the AI system is itself biased, or when the algorithm is designed in a way that amplifies or reinforces existing biases. Algorithmic bias can have serious consequences, as it can lead to unfair treatment of individuals or groups, and can perpetuate and amplify societal inequalities.

Responsible artificial intelligence, on the other hand, involves taking steps to mitigate and address algorithmic bias, as well as considering the broader ethical and societal implications of AI. This can involve ensuring that AI systems are trained on diverse and representative data sets, and designing algorithms that are transparent and explainable, so that their decision-making processes can be understood and evaluated. Responsible AI also involves being transparent about the data and algorithms used to train and operate AI systems, and being accountable for their actions and decisions. It requires collaboration between multiple stakeholders, including researchers, developers, policymakers, and the public, to ensure that AI is developed and used in a way that is beneficial to society.

While prompts like the one above represent a good attempt to automate summaries of current literature or what we know from repositories such as Wikipedia. For now, these are proprietary systems that cannot be openly scrutinized by the public. A few journalists and researchers sent in queries and requests that produced disturbing results. IEEE Spectrum for example requested that DALL-E 2 perform a query for an image of “a technology journalist writing an article about a new AI system that can create remarkable and strange images.” In response, it sent back only pictures of men.

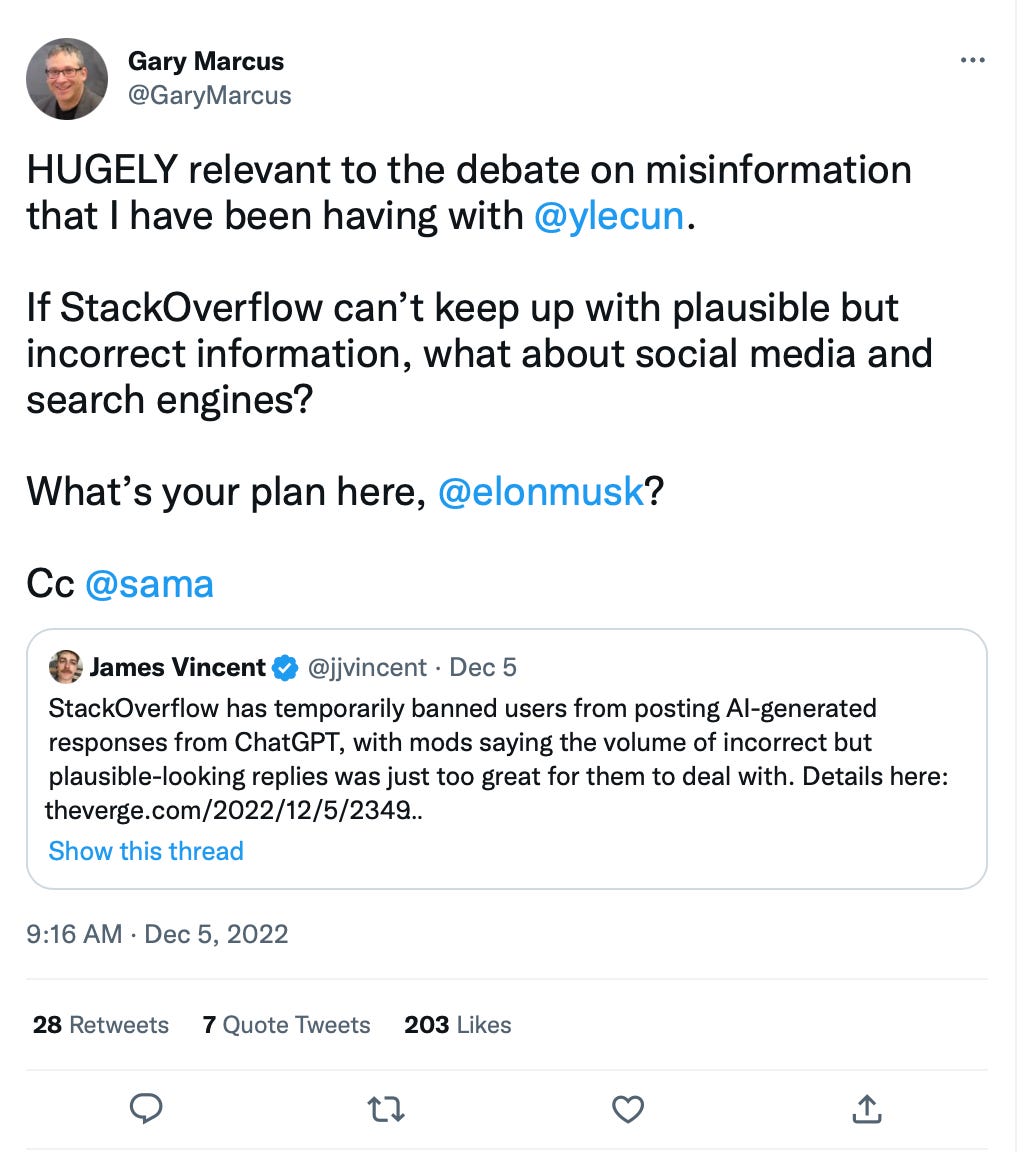

Some of the new applications of generative AI seem far, far worse. Slate reported one investigation where a writer requested Davinci-003 to write his own obituary which resulted in the AI spewing out bizarre lies, including fabricated references and Wikipedia entries of pages that did not exist. There have been several discussions on Twitter and Reddit about how ChatGPT is fabricating fake references and bibliography. The challenge then is not with the ability of AI to generate sample data but our ability to authenticate and establish credentialed information at scale. Realizing the scale of this problem, Stack Overflow , a very popular Q&A site for coders and programmers, has temporarily banned users from sharing ChatGPT responses. As Gary Marcus pointed out, “If StackOverflow can’t keep up with plausible but incorrect information, what about social media and search engines?”

This echoes critiques by philosophers such as John Searle who argues against the Turing test being a test of machine intelligence, on the grounds that programming a digital computer may make it appear to understand language but does not produce real understanding.

While industry observers are already hailing ChatGPT as the death of Google search, Chirag Shah and Emily Bender pointed out in a recent paper that critical functions of search are to provide “information verification, information literacy, and serendipity,” values that seem to be absent from current discussions of Generative AI.

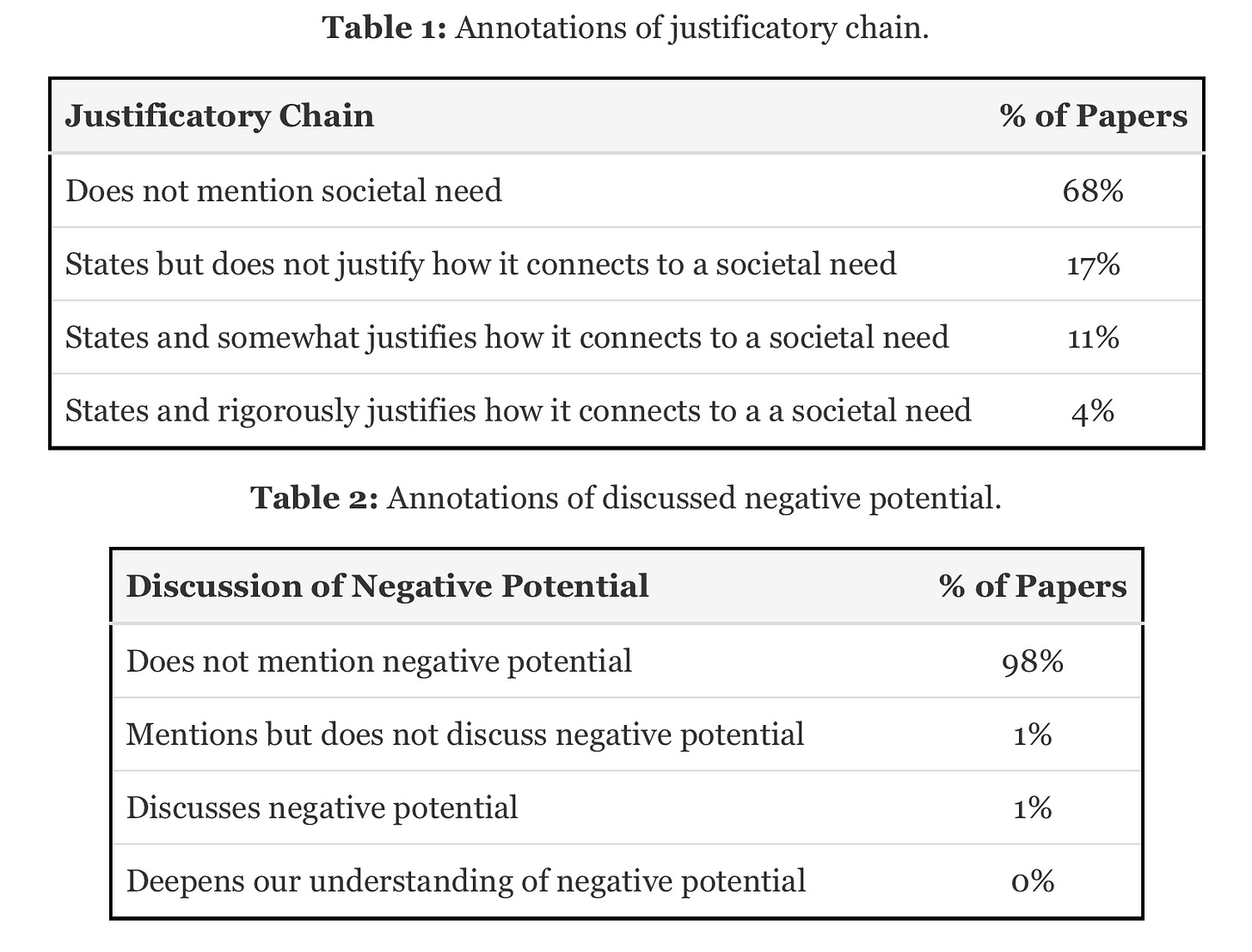

In this regard, I found recent work by Abeba Birhane and co-authors1 a great way to think about encoded values of ML. Their analysis of values highlighted in machine learning research finds that values such as "Performance, Generalization, Quantitative evidence, Efficiency, Building on past work, and Novelty" dominate as justifications for research. Table below reproduced from Birhane et al. 2022.

Amidst all the hype about generative AI, it is incumbent on us to ensure that the second coming of AI does not turn into a version of Yeats’ “Slouching Towards Bethlehem” by ensuring that the societal and ethical implications of AI are not forgotten.

Abeba Birhane, Pratyusha Kalluri, Dallas Card, William Agnew, Ravit Dotan, and Michelle Bao. 2022. The Values Encoded in Machine Learning Research. In 2022 ACM Conference on Fairness, Accountability, and Transparency (FAccT '22), June 21–24, 2022, Seoul, Republic of Korea.ACM, New York, NY, USA 12 Pages. https://doi.org/10.1145/3531146.3533083