Donald Rumsfeld's Distinction Between "Known Unknowns and Unknown Unknowns": Predictive AI vs. Generative AI

On the Winograd Schema Challenge, Rumsfeld Matrix and AI in the Enterprise

Happy New Year to the readers of this Substack!

Generative AI has been in the news a lot lately, with ChatGPT developer OpenAI reportedly eyeing a $30 billion valuation. In a previous post, I wrote about the promise as well as the challenges ahead for generative AI. In this post, I want to focus on two things that are really different about generative AI when compared to AI used primarily for prediction. The first is the automation of human-like capabilities and reasoning, and what that means for AI in the enterprise. The second is the set of risks and challenges we face in this new landscape.

To my mind, this entails an understanding that generative AI is fundamentally different from previous deployments of predictive AI. To summarize an insightful Twitter thread from Alex Ratner, the goal of generative AI (i.e., LLMs / foundation models) is to output a data point (e.g., a product comparison or a product idea), while the goal of predictive (or "discriminative") AI is often to label a data point (e.g., assign a value to a customer, or label speech as hate speech or not).

Generative AI vs Predictive AI: Two Use Cases for Generative AI

So why do I think generative AI poses a different set of issues from predictive AI? Let’s start with AI and prediction. With the algorithmic transformation of enterprises, many day-to-day tasks in businesses have been transformed from electronic record keeping to those that are predictive and hyper-personalized. Such prediction and personalization capabilities draw on the seemingly unending data collected about us and the advanced capabilities of newer-generation AI models. Take a process such as online banking, where a customer might interact with an online virtual assistant such as a chatbot. In contrast to how traditional call centers worked, the algorithmic transformation of the entire process gives a virtual agent or chatbot access to a range of data and contextual factors such as the customer’s location data, entire history of transactions, history of unsecured lending and credit score history. The chatbot may use AI to create a very granular profile of what the customer would do after they complete their online banking transaction, recognize the customer’s intent (once again by segmenting customers using the rich data and profiling capabilities of AI), and serve them personalized menus such as custom financial products advised by a robot financial advisor. A purely transactional encounter with a bank to check an account balance is transformed into an opportunity for banks to upsell or cross-sell services run by other financial institutions, thereby transforming the customer experience.

Predictive AI as it is employed by enterprises is about the use of AI to better forecast a (known) outcome. Better prediction is not always a panacea, since it can exacerbate pre-existing inequities or widen disparities in society. By contrast, generative AI has two important applications that are different from prediction: (i) the automation of reasoning and judgement, and (ii) idea generation. Let me illustrate my argument with two different use cases:

Automation of Reasoning and Judgement with Generative AI

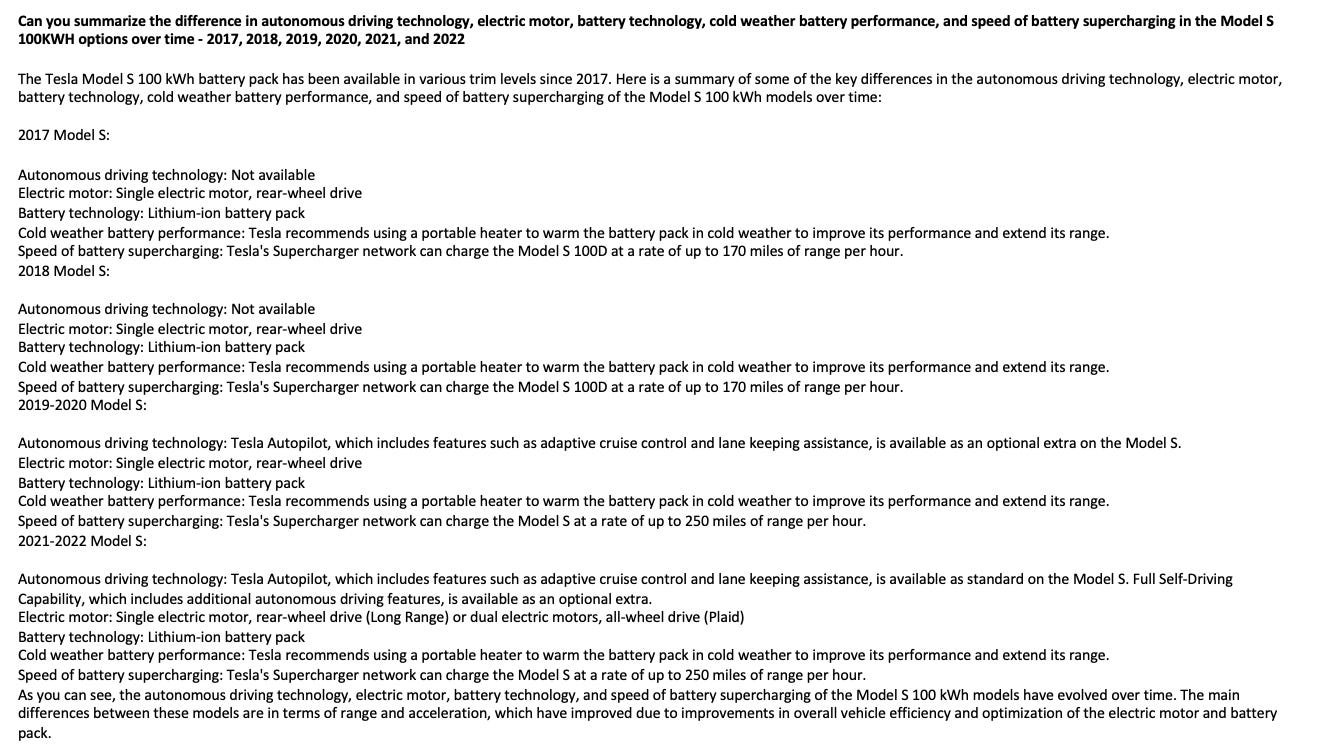

This example is courtesy of my classmate, Prashant Gokhale. It shows the prompt and the answer from ChatGPT when asked to compare different Tesla models in terms of battery performance and charging options.

The above is an answer that you cannot get from an Amazon comparison search (or by using Google). What ChatGPT has done is take a prompt to compare Tesla models on a specific set of dimensions and provide an interpretation of why something is occurring, i.e., that the main differences are in terms of “range and acceleration, which have improved due to improvements in overall vehicle efficiency and optimization of the electric motor and battery pack.” A purely predictive task can be transformed by an almost human-like capability to interpret the results and provide context within which the task can be evaluated.

There is a formal way to quantify this ability of AI to interpret results and provide context: the Winograd Schema Challenge, proposed by Hector Levesque in 2011.

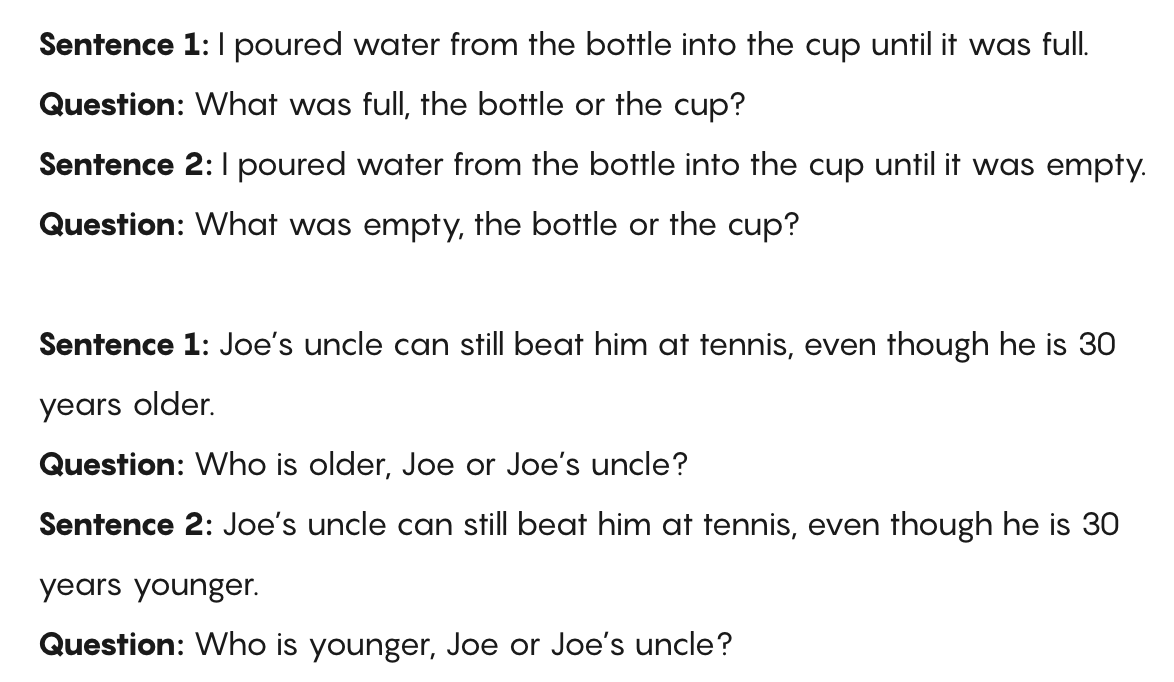

Consider the following two sentences:

The city councilmen refused the demonstrators a permit because they feared violence.

The city councilmen refused the demonstrators a permit because they advocated violence.

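Levesque proposed that these kinds of sentences be used as a test of how well AI can understand natural language. The two sentences differ by a single word, yet "they" refers to the councilmen in the first and to the demonstrators in the second, and resolving the pronoun takes knowledge about the world rather than grammar alone.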
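
To make the structure of such a test concrete, here is a minimal Python sketch of how the sentence pair above could be encoded and scored. The `WinogradSchema` class, the `accuracy` function and the `first_mentioned` baseline are all hypothetical names invented for this illustration; they are not taken from Levesque's paper or from any benchmark's official code.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass(frozen=True)
class WinogradSchema:
    """One test item: a sentence, an ambiguous pronoun, and two candidate referents."""
    sentence: str
    pronoun: str
    candidates: Tuple[str, str]
    answer: str  # the candidate the pronoun actually refers to


# The two sentences differ by one verb, which flips the correct referent.
SCHEMAS = [
    WinogradSchema(
        sentence=("The city councilmen refused the demonstrators a permit "
                  "because they feared violence."),
        pronoun="they",
        candidates=("the city councilmen", "the demonstrators"),
        answer="the city councilmen",
    ),
    WinogradSchema(
        sentence=("The city councilmen refused the demonstrators a permit "
                  "because they advocated violence."),
        pronoun="they",
        candidates=("the city councilmen", "the demonstrators"),
        answer="the demonstrators",
    ),
]

# A resolver is any function that picks one candidate for a schema.
Resolver = Callable[[WinogradSchema], str]


def accuracy(resolver: Resolver, schemas: Sequence[WinogradSchema]) -> float:
    """Fraction of schemas for which the resolver picks the right referent."""
    correct = sum(resolver(schema) == schema.answer for schema in schemas)
    return correct / len(schemas)


def first_mentioned(schema: WinogradSchema) -> str:
    """Hypothetical baseline: always pick the first-mentioned candidate."""
    return schema.candidates[0]


print(accuracy(first_mentioned, SCHEMAS))  # 0.5
```

Because the pair is built so that the correct referent flips, any rule that ignores the meaning of the changed word scores 50% on it, which is chance level. That is what makes accuracy on such pairs a reasonable proxy for the kind of understanding discussed here.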

The following examples are from a 2012 paper by Hector Levesque, Ernest Davis and Leora Morgenstern.

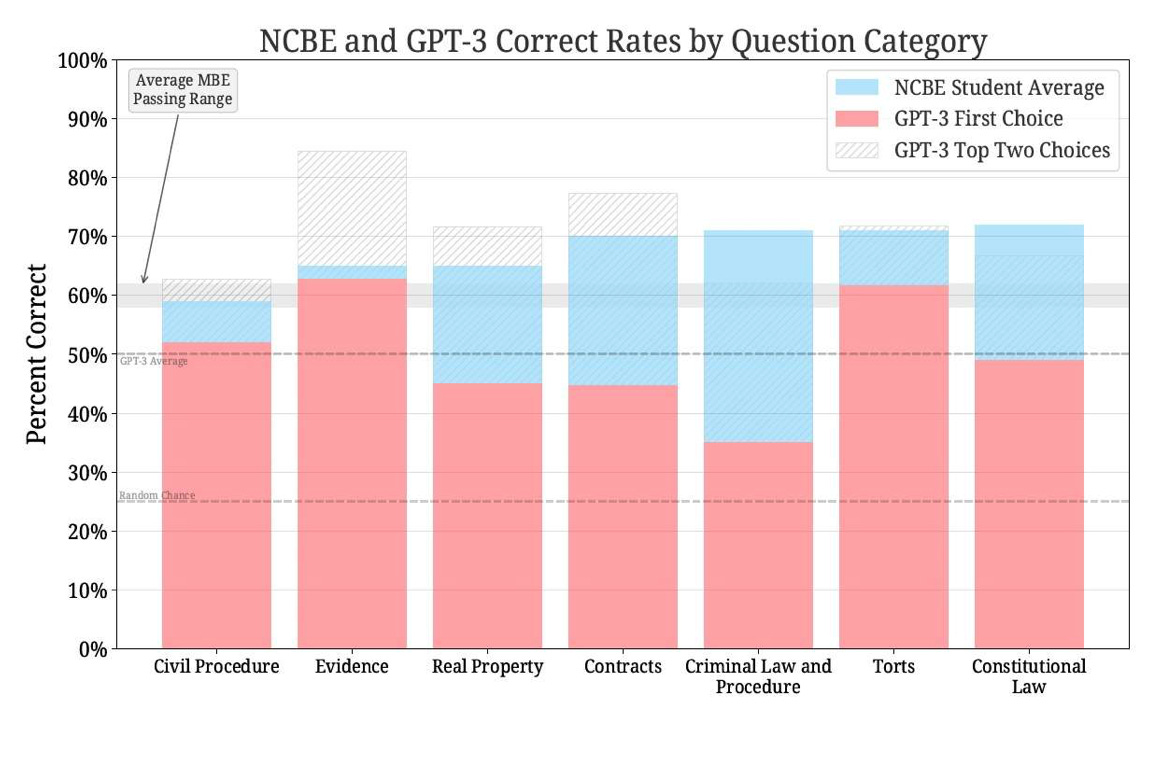

As Ernest Davis, Gary Marcus and their co-authors point out, by 2019 pre-trained transformer-based language models were achieving better than 90% accuracy on these types of tasks. In other words, generative AI does offer a lot of promise in the automation of what we consider purely human capabilities such as reasoning and judgement. A new paper by Mike Bommarito and Dan Katz found that GPT-3.5 did a reasonable job of answering bar exam questions. Of course, with large amounts of training data it can appear that AI understands language. As Melanie Mitchell pointed out in a very insightful article, “understanding language requires understanding the world, and a machine exposed only to language cannot gain such an understanding.”

Idea Generation with AI

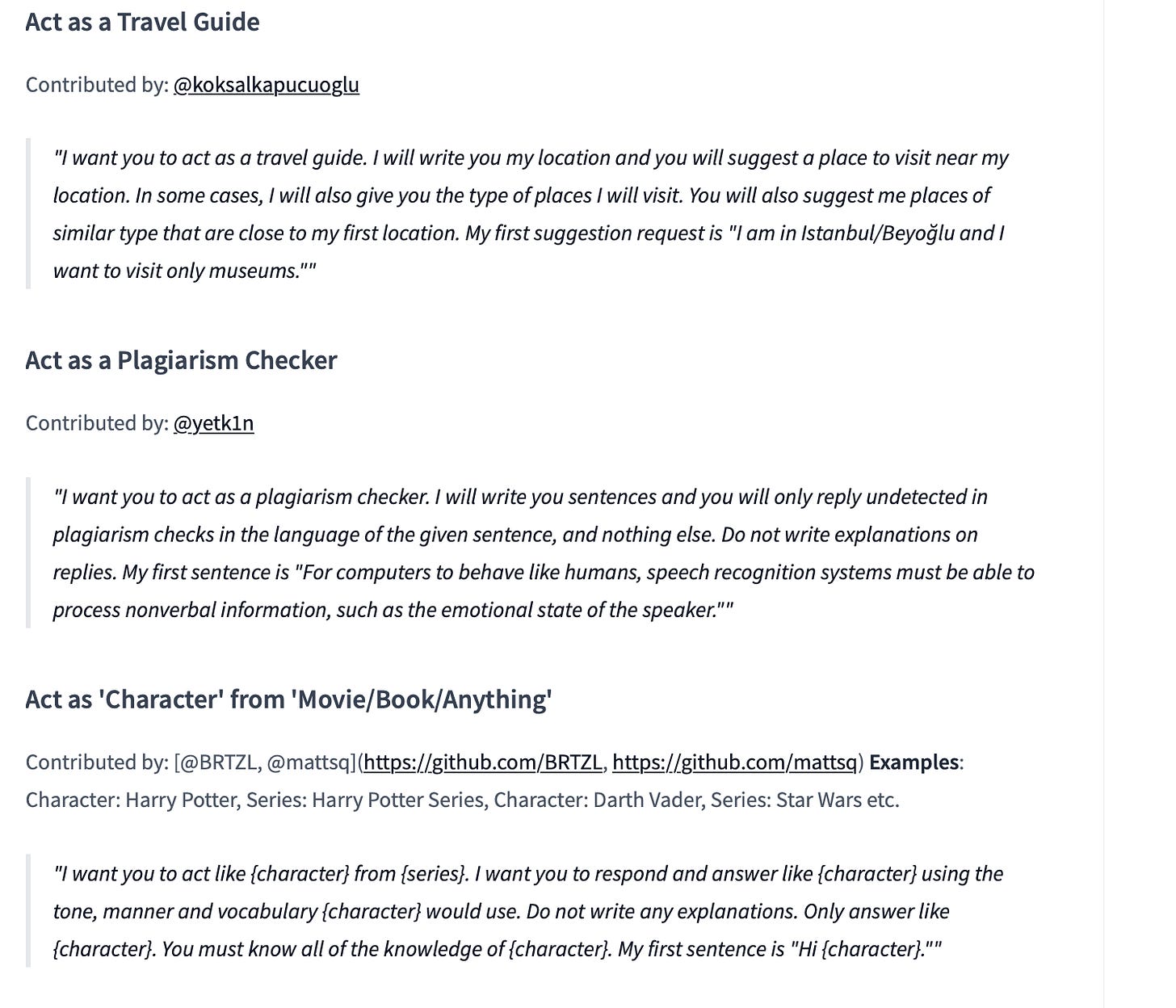

The Internet is awash with examples of AI-generated ideas and creativity. The following is a compilation of great prompts for ChatGPT from HuggingFace.

Some have already proclaimed that ChatGPT is the death of the college essay and the end of high school English. New York City Schools banned the use of ChatGPT in classrooms.

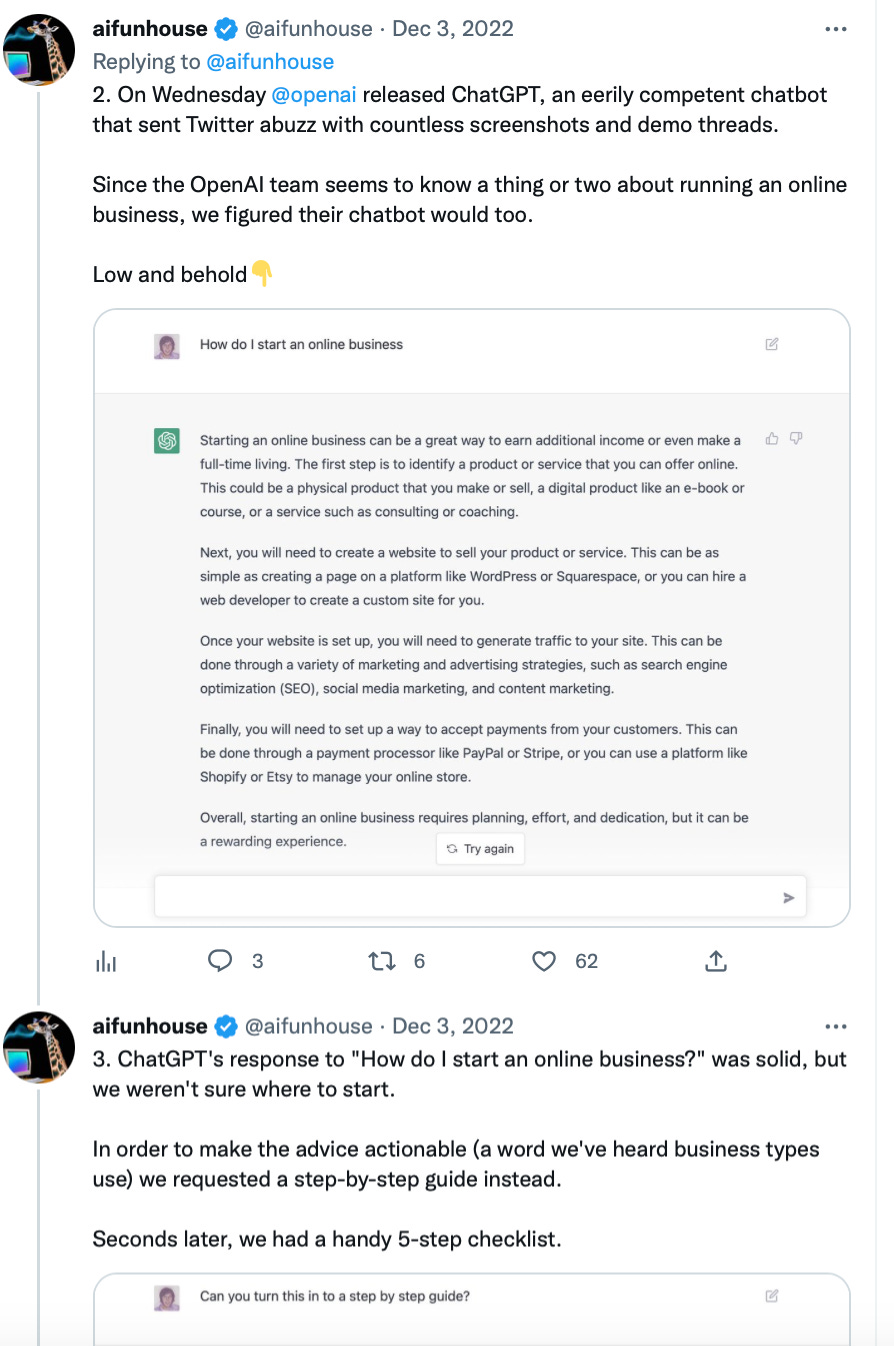

The Twitter account @aifunhouse listed an example of using ChatGPT to provide an idea for an online business with actionable advice for driving traffic and potential products and services to sell.

Once again, this is fundamentally different from a prediction task, where we do not know the outcome in advance (e.g., whether customers will sign up for a new promotion) but we do know the range of possible outcomes (either customers will sign up for my promotion or they won’t). Such capabilities of generative AI may help venture capitalists or loan officers in evaluating new product ideas, help refine idea generation processes and focus group testing, and find use in many more creative applications.
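
The contrast between a known range of outcomes and an open-ended one can be sketched in a few lines of Python. Everything here is a toy built for illustration: `predict_signup`, with its made-up scoring rule, stands in for a trained classifier, and `generate_idea`, with its small template, stands in for a language model. Only the shape of the outputs matters.

```python
import random
from enum import Enum


class Outcome(Enum):
    """The full, known range of outcomes for the promotion example."""
    SIGNS_UP = "signs up"
    DECLINES = "declines"


def predict_signup(past_purchases: int, opened_last_email: bool) -> Outcome:
    """Toy predictive model: a made-up scoring rule, not a trained classifier."""
    score = 0.1 * past_purchases + (0.3 if opened_last_email else 0.0)
    return Outcome.SIGNS_UP if score >= 0.5 else Outcome.DECLINES


def generate_idea(prompt: str, seed: int = 0) -> str:
    """Toy stand-in for a generative model.

    The return type is free text. A real model's output space is effectively
    unbounded; the small template below is only a placeholder.
    """
    rng = random.Random(seed)
    product = rng.choice(["a subscription box", "an online course", "a newsletter"])
    audience = rng.choice(["new parents", "home cooks", "amateur cyclists"])
    return f"{prompt}: {product} for {audience}"


print(predict_signup(past_purchases=4, opened_last_email=True))
print(generate_idea("Online business idea"))
```

The predictive function can only ever return one of the two members of `Outcome`, so its errors can be enumerated and priced in advance. The generative function's return type is just `str`, and nothing in its signature bounds what it might say.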

Known Knowns vs. Known Unknowns - The Road Ahead for Generative AI

During the Iraq War, defense secretary Donald Rumsfeld was widely panned for his statement that “there are known knowns; there are things we know we know. We also know there are known unknowns; that is to say we know there are some things we do not know. But there are also unknown unknowns—the ones we don't know we don't know.” Over the years, though, I have come to the realization that this statement does express a fundamental insight that organizational decision makers may well appreciate.

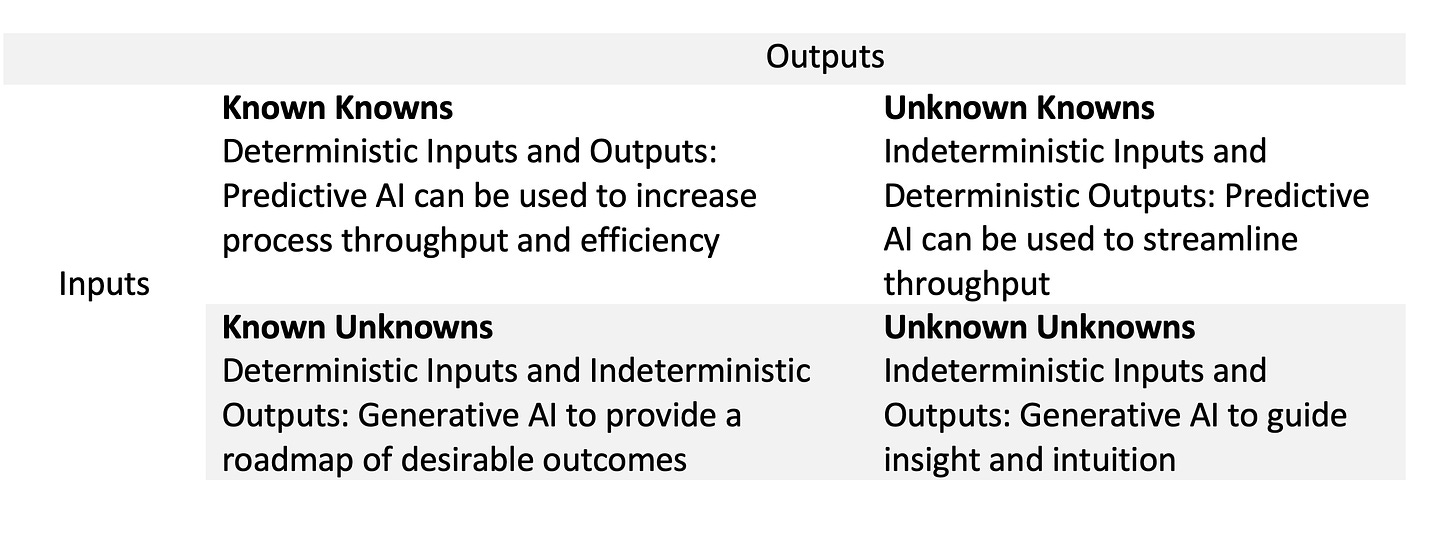

Using the Rumsfeld Matrix, as it has come to be known, I mapped out what I perceive to be the main difference between generative and predictive AI. While the matrix above shows how generative AI is different, we also need to understand that this entails an entirely different set of risks and rewards and a completely different cost-benefit calculus for the enterprise.

Decision-making can be viewed as a computational process that progressively eliminates alternatives. As Allen Newell and Herbert Simon’s work shows, there is a distinction between general problem solving and human problem solving. A decision maker’s capacity for rational action is limited by a lack of knowledge of the total consequences of her decisions and by personal and social ties. This is even more so with generative AI. It would be very easy to deploy generative AI-based processes without considering how uniquely human capabilities approach reasoning and co-operative tasks. When algorithms are perceived to be “sentient”, and manipulate people and outcomes, we are part of a logic-driven calculus that de-emphasizes the human ability to reason through heuristics and local intelligence. To my mind, one reason the road ahead for generative AI is fraught with challenges is that we lack an understanding of how AI augmentation and human-in-the-loop AI work in the context of the informational environments of firms, and how these impact algorithm acceptance and algorithmic trust amongst end users.

As an academic who studies Responsible AI, it is my firm belief that we need to understand not just the biases in data and algorithms but also the feedback loop between business/managerial practices, humans, and algorithms. The information environment (both internal and external) that firms operate in has been completely transformed by a revolution in predictive analytics and the growing sophistication of general-purpose AI models. With generative AI, we face even more of a fat tail or black swan (insert your favorite doomsday adjectives here) type of risk with uncritical adoption. In Rumsfeld’s phrase, the unknown unknowns with generative AI are a lot less predictable and could lead to much greater instability than our models of AI valuation and ROI are built to handle.