When My Algorithm Swears She is Made of Truth: On Algorithmic Interfaces and Algorithmic Anxiety

On algorithmic recognizability and human-in-the-loop algorithmic cognition

There is a cute scene in the romcom “You've Got Mail” where the heroine Kathleen Kelly, played by Meg Ryan, ends up in a cash-only checkout line during the holiday season without cash. The hero, who also happens to be her business rival, charms the checkout counter employee into letting the heroine use her credit card. Aside from giving the leads an opportunity to engage in some witty repartee, the scene also evokes a sense of nostalgia for an Upper West Side where one can serendipitously run into business rivals or romantic interests!

Romantic comedy plot points aside, this is a lost world given our current algorithmic reality. Our interactions are algorithmically mediated: consumers increasingly shop online or use curbside pickup, and stores have AI-assisted chatbots and self-checkout counters. In today’s world, Kathleen Kelly would use a shopping app and either have her groceries delivered or pick them up curbside, so modern romcoms can dispense with meet-cute ideas at the checkout line. AI has transformed the world of retail with personalization and automation [1].

What is distinct about information seeking in our algorithmically engineered world is that users are steered towards viewing choices by black-box algorithmic recommendations. Digital platforms change the nature of information seeking from whimsical (or routinized) discovery into one where algorithms orchestrate a multi-faceted curation of relevant as well as irrelevant recommendations. In the process, the platforms assume a de facto credentialing role in sorting, curating, and presenting personalized results to users. The criteria employed by tech platforms encompass what some scholars refer to as “public relevance algorithms,” in that they have replaced scientific expertise or credentialing methods in identifying what we need to know. At the same time, users are heterogeneous in their information needs and may often face algorithmic anxiety in steering through a bewildering ocean of content. Users are not always clear about how platforms prioritize their information needs, or whether the platforms even understand what they are looking for.

The platform then performs two important functions in directing user attention: it provides relevant information by assuming the role of an authoritative information provider, and it reduces the choice set users face in navigating a bewildering array of content, easing their cognitive overload. Viewers searching for widely prevalent medical symptoms on a platform such as YouTube could easily be led to popular but irrelevant videos. The first function of the platform, then, is to provide a de facto set of credentialed healthcare information, while the second is to reduce the cognitive overload a user faces in navigating health directives that are often couched in hard-to-disentangle technical language.
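The two functions described above can be pictured as a small filtering step. What follows is only an illustrative sketch, not a description of how YouTube or any other platform actually ranks content: the `Video` fields, the `credentialed` flag, the relevance scores, and the cutoff `k` are all hypothetical assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Video:
    title: str
    relevance: float    # hypothetical score in [0, 1] for the user's query
    credentialed: bool  # hypothetical flag: from a vetted health source?
    views: int          # popularity, deliberately ignored by curate()


def curate(videos: list[Video], k: int = 2) -> list[Video]:
    """Keep only credentialed videos, rank by relevance, return the top k."""
    # Function 1: act as a de facto credentialing filter.
    vetted = [v for v in videos if v.credentialed]
    # Function 2: shrink the choice set to limit cognitive overload.
    ranked = sorted(vetted, key=lambda v: v.relevance, reverse=True)
    return ranked[:k]


# Made-up catalog for illustration only.
catalog = [
    Video("Viral home remedy", relevance=0.30, credentialed=False, views=2_000_000),
    Video("Hospital explainer on symptoms", relevance=0.90, credentialed=True, views=40_000),
    Video("Clinic Q&A", relevance=0.70, credentialed=True, views=15_000),
    Video("Medical school lecture", relevance=0.50, credentialed=True, views=8_000),
]

shortlist = curate(catalog, k=2)
print([v.title for v in shortlist])
```

In this toy catalog, ranking by views alone would put the viral video first, which is the “popular but irrelevant” case; the sketch instead filters on the credential flag before narrowing the list.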

Herbert Simon defined an artifact as “an ‘interface’ in today's terms between an ‘inner’ environment, the substance and organization of the artifact itself, and an ‘outer’ environment, the surroundings in which it operates.” In today’s world, our sense of reality and our set of choices are controlled or shaped by algorithmic artifacts, be it in comparison shopping or in how we think about what to buy and where to shop. Each artifact is embedded in a cognitive architecture in which it must couple its inner environment with its outer environment through an interface.

In the world we live in, algorithmic interfaces control what’s on Kathleen Kelly’s grocery list and where she buys it. Instead of being trapped in the wrong checkout line, Kathleen now needs to navigate the vagaries of dynamic pricing when her Uber ride has a price surge [2] or when Google re-routes her trip to the store. Her recommendation set and product consideration set are also shaped by the arcane and complex world of online ad targeting and re-targeting.

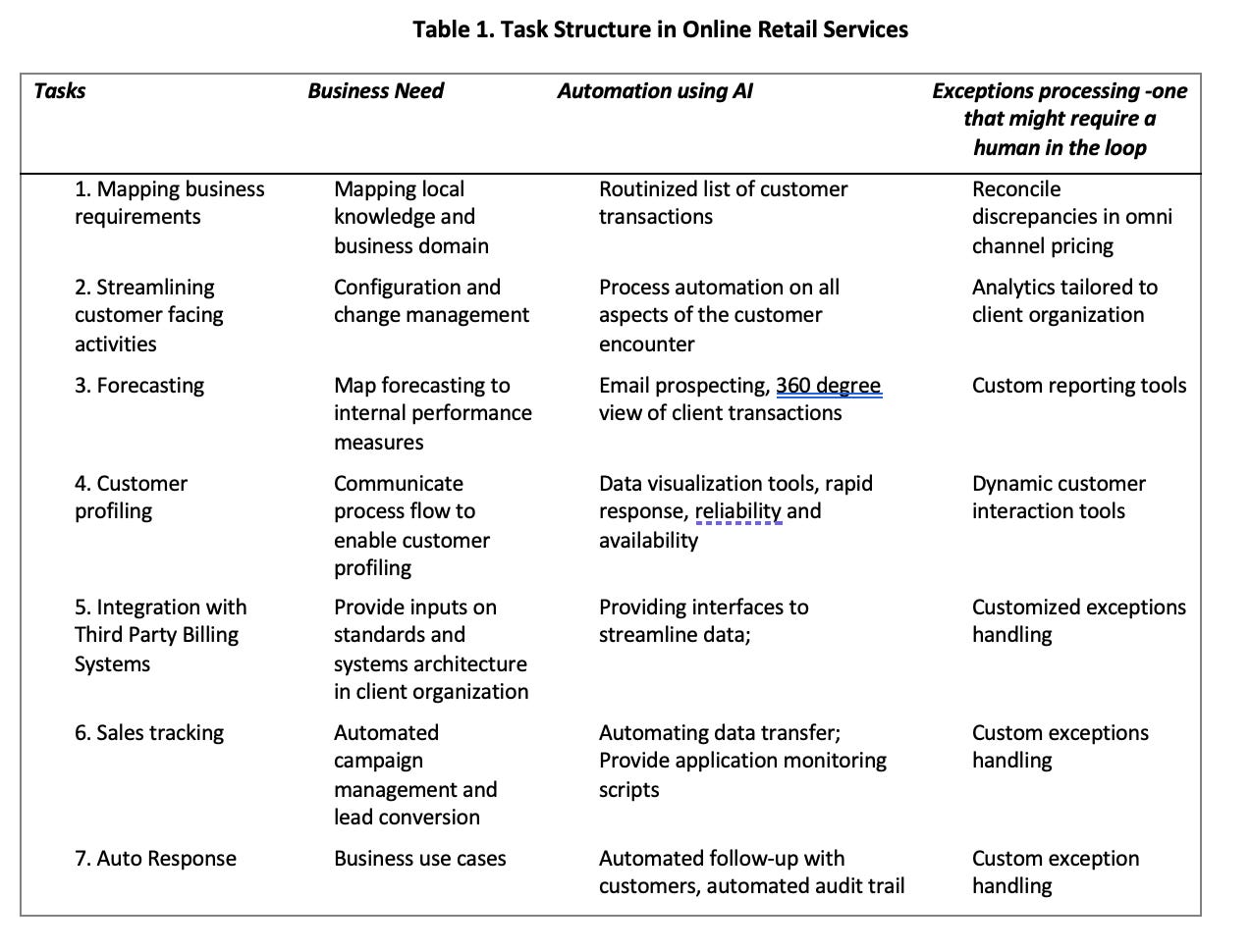

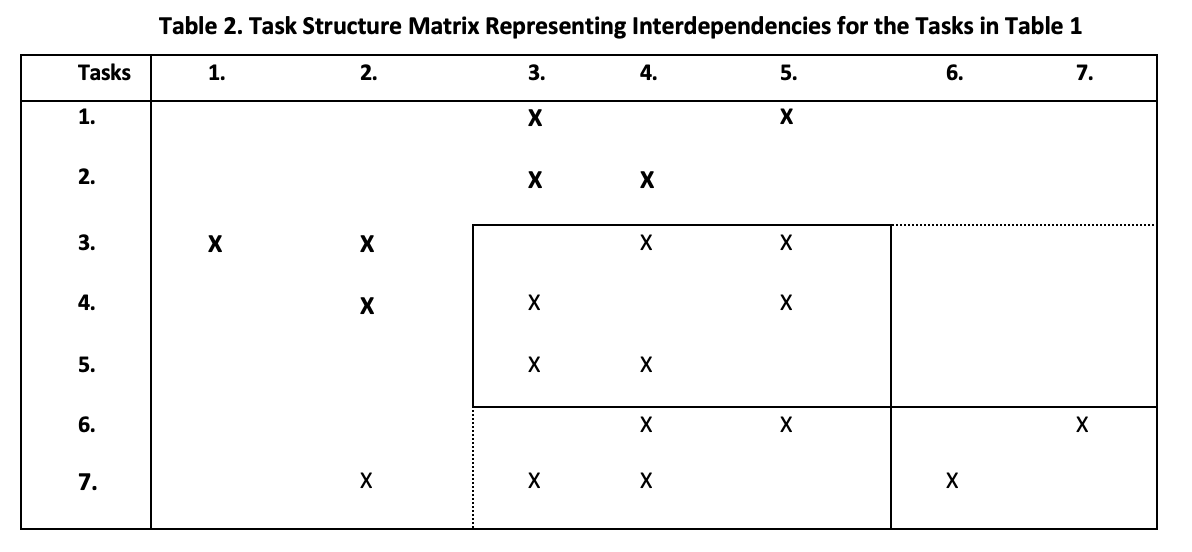

Below, I have a hypothetical scenario identifying task interactions and the role played by algorithmic interfaces in the context of online retail.

What is a good design consideration in this scenario? Is it reducing recommendation overload and algorithmic anxiety? Is it automation and efficiency in reducing throughput at a physical store? We need a clear planning horizon in which an organization's business objectives also account for intangible goodwill.

As Herbert Simon says in the third edition of “The Sciences of the Artificial,” “The first generation of management information systems installed in large American companies were largely judged to have failed because their designers aimed at providing more information to managers, instead of protecting managers from irrelevant distractions of their attention. A design representation suitable to a world in which the scarce factor is information may be exactly the wrong one for a world in which the scarce factor is attention.”

From our current vantage point, the algorithmic design choices we make should consider the tradeoffs in bias and fairness, and should also weigh the downsides of algorithmic anxiety. Once again, quoting Herbert Simon, “the task is not to design information-distributing systems but intelligent information-filtering systems.”

With greater scrutiny of algorithmic harms from agencies such as the FTC, and with lawmakers debating privacy protections through the American Data Privacy and Protection Act (ADPPA), we also need to consider how to shield customers from intrusive data gathering practices carried out in the guise of improving consumer welfare. Last but not least, such a perspective (called the design science approach), centered around the artifact, brings in a critical aspect that is often missing in current discussions about technology: artifacts as aesthetic objects.

The first time I bought an Apple product was a revelation. The revelation was not the device itself, which is a great product, but the way the product was packaged. Starting with the “Designed by Apple in California” label, the experience of impossibly clean lines and minimalist style as the product comes into view was dubbed a “ballet of unwrapping” by an industrial design magazine. The Apple packaging, at least to me, represented the kind of performance you expect from a chef unveiling his prize creation. Of course, high-end retailers have understood this for a long time. Witness Tiffany with its signature boxes. However, this was not something that technology companies were necessarily known for. Most of our waking hours are spent interacting with technological devices, and indeed even with multiple devices at a time! And yet, most high-tech companies relegated design and user interaction to the sidelines.

Apple was one of the first technology companies to understand the consumerization of technology, and its culture was the antithesis of an engineering culture that emphasizes function and product features. Apple had designers who were solely responsible for designing a package. Steve Jobs said in an interview that the use of proportionally spaced fonts in Apple products comes from observing typesetting.

It is this synthesis of form and function, engineering as aesthetic art, that is missing in current discussions of technology. That is perhaps not surprising, since our algorithmically engineered world has morphed into a Matrix-like algorithmic leviathan that takes everyone’s personal data and transforms each of us into a digital identifier, so that we define and recognize people, objects and connections through the lens of predictive algorithms.

It doesn’t have to be this way. Technology can be beautiful and humanizing. AI and computers can be marvelous general purpose technologies that let us explore ourselves, enabling connected individuals to scan for extraterrestrial intelligence, to decode the human genome, and to reduce digital health literacy disparities.

One of the most creative uses of AI I have seen in recent times is to re-imagine how city streets would look without cars and with more green features. The resulting pictures are not only visually appealing, suggesting a much more livable future than some of our soulless and largely deadened urban centers, but also suggest how AI could play a part in urban revival and in town planning.

I can’t help thinking that part of the problem is also that engineering and liberal arts, especially in the US, seem to operate across an almost unbridgeable chasm. Engineers are parodied as social misfits, tongue-tied, lacking social graces and obsessed with Star Wars and video games. We need greater awareness of sociotechnical systems, a fusion of form and function, an understanding of the people, processes and business context that algorithms operate in.

[1] https://www.forbes.com/sites/blakemorgan/2019/03/04/the-20-best-examples-of-using-artificial-intelligence-for-retail-experiences/

[2] https://hbr.org/2021/09/the-pitfalls-of-pricing-algorithms