Recent years have seen a disruptive transformation of business through a range of Artificial Intelligence (AI) and Machine Learning (ML) methods. As AI/ML enables the widespread adoption of automated decision making, it becomes necessary to explain the methods behind these AI/ML models, and the predictions they produce, to stakeholders such as customers, regulatory agencies, employees, and shareholders.

Explainable Artificial Intelligence (XAI) is a term coined by the Defense Advanced Research Projects Agency (DARPA) to refer to AI/ML systems that “enable human users to understand, appropriately trust, and effectively manage the emerging generation of artificially intelligent partners.” An industry interpretation comes from PwC[1], which recently defined Explainable AI (or ‘XAI’) as “a machine learning application that is interpretable enough that it affords humans a degree of qualitative, functional understanding, or what has been called ‘human style interpretations’.” The Computing Community Consortium (CCC), together with the White House Office of Science and Technology Policy (OSTP) and the Association for the Advancement of Artificial Intelligence (AAAI), has established a set of guidelines and definitions for fair and responsible AI.

“Fair AI” is defined as AI that seeks to ensure that the applications of AI technology lead to fair results, i.e., the use of AI should not lead to discriminatory impacts on people. Understandability denotes the ability of a model to let a human understand its function (how the model works) without any need to explain its internal structure or the algorithmic means by which it processes data.

The European Commission (EC) has published ethical guidelines for trustworthy AI that include an assessment checklist covering seven requirements that those involved in creating AI systems should address: (i) human agency and oversight; (ii) technical robustness and safety; (iii) privacy and data governance; (iv) transparency; (v) diversity, non-discrimination and fairness; (vi) societal and environmental well-being; (vii) accountability.

Different Dimensions of Responsible AI

Responsible AI encompasses several dimensions of how AI is developed and used, including AI ethics and the transparency of AI systems. If a bank uses an AI system to determine whether a given loan should be approved, the prediction from the model alone is not enough. If an AI-powered system denies a person’s loan application, bank executives should be able to review the AI’s decision-making step by step to determine exactly where the denial occurred, as well as why the loan was denied. The user of such a system should also be able to explain why the method makes a categorization or prediction in a certain way, in terms that a human can easily interpret rather than in terms of the type of machine learning method or its tunable parameters. For instance, if the bank relies on historical data that is biased because it oversamples customers from a particular racial or demographic group, the decisions of such an automated method are likely to be discriminatory as well. Conversely, if implemented well, algorithmic lending might even reduce the biases that exist in current lending systems.
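To make the loan example concrete, here is a minimal sketch of a decision that can be reviewed factor by factor. It assumes a simple additive scoring model; the feature names, weights, baseline, and threshold are all hypothetical and chosen only for illustration, not taken from any real lending system.

```python
# Hypothetical weights for an illustrative additive credit score.
WEIGHTS = {
    "income_thousands": 0.5,
    "years_employed": 2.0,
    "missed_payments": -15.0,
}
BASELINE = 20.0            # assumed starting score
APPROVAL_THRESHOLD = 50.0  # assumed cut-off for approval


def explain_decision(applicant):
    """Return the decision, the total score, and each feature's contribution."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = BASELINE + sum(contributions.values())
    decision = "approved" if score >= APPROVAL_THRESHOLD else "denied"
    # Sort ascending so the factors that lowered the score most come first.
    ranked = sorted(contributions.items(), key=lambda item: item[1])
    return decision, score, ranked


applicant = {"income_thousands": 60, "years_employed": 3, "missed_payments": 2}
decision, score, ranked = explain_decision(applicant)

print(f"Decision: {decision} (score {score:.1f}, threshold {APPROVAL_THRESHOLD:.1f})")
for name, value in ranked:
    print(f"  {name}: {value:+.1f}")
```

Because every contribution is listed next to the final score, a reviewer can see which factor drove the denial without knowing anything about the modelling technique. More complex models generally need dedicated explanation methods to produce a comparable breakdown.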

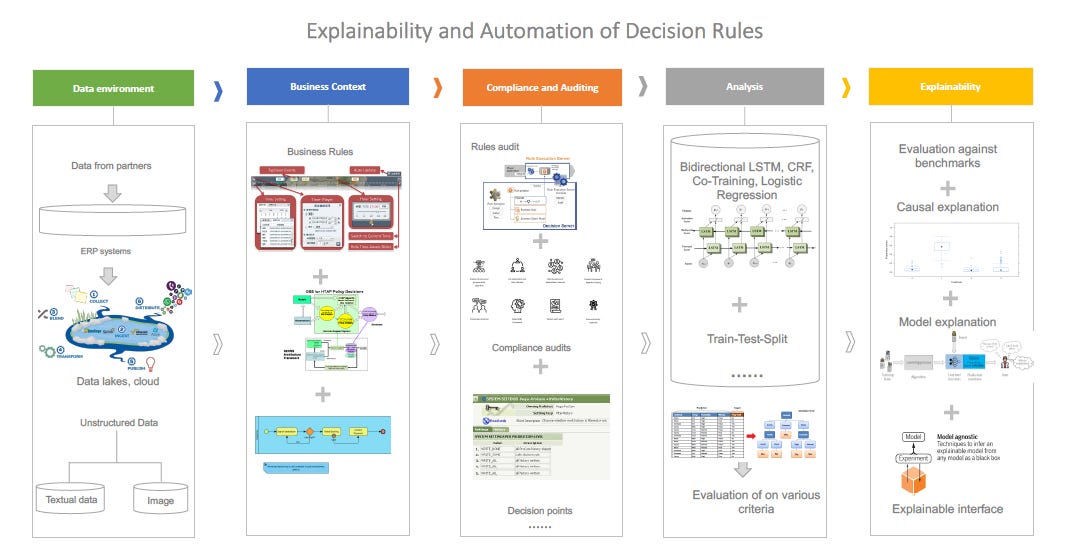

Figure 1 illustrates different dimensions of XAI. The focus in most AI/ML work is on the analysis part, where the data is used to build models for a particular business context (the box titled analysis in Figure 1). XAI encompasses significantly more than building an automated decision model using AI/ML methods; it also requires an understanding of the verification and validation mechanisms and of how users interact with AI-based models.

Organizations use data from multiple sources and apply automated decision making in multiple business contexts, and these decisions are subject to compliance and audit requirements. For example, Title VII of the Civil Rights Act of 1964 broadly prohibits hiring discrimination by employers and employment agencies on the basis of certain demographic characteristics. However, the current practice of AI/ML leaves open considerable ambiguity about how such Civil Rights protection applies to algorithms used for predicting hiring[2]. The current prescription for validity tests does not address the complex and inscrutable toolkit that is the staple of AI/ML models. Without active measures to mitigate them, biases will arise in AI/ML tools by default. Thus, the principles of XAI and of the ethical and fair use of AI go beyond the objective of building an automated system alone; they also need to take into account the regulatory and business environment in which the organization operates, the data environment, and the considerations of stakeholders.
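One simple check that can be applied to the output of a hiring model is the "four-fifths rule" of thumb from the US Uniform Guidelines on Employee Selection Procedures, under which a group whose selection rate falls below 80% of the highest group's rate is flagged for possible adverse impact. The post itself does not prescribe this test; the sketch below is only an illustration, and the group names and counts are made up. It also assumes that at least one group has a non-zero selection rate.

```python
def selection_rates(outcomes):
    """Map each group to selected / applicants.

    `outcomes` maps a group name to (number selected, number of applicants).
    """
    return {group: selected / total for group, (selected, total) in outcomes.items()}


def adverse_impact_ratios(outcomes):
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())  # assumes at least one group was selected
    return {group: rate / best for group, rate in rates.items()}


# Made-up screening results from a hypothetical hiring model.
outcomes = {"group_a": (50, 100), "group_b": (30, 100)}

ratios = adverse_impact_ratios(outcomes)
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]

for group, ratio in ratios.items():
    print(f"{group}: impact ratio {ratio:.2f}")
print("Flagged for review:", flagged)
```

A ratio below the threshold is a prompt for closer review rather than a verdict. It says nothing about why the rates differ, which is where the explainability and validation parts of the framework come in.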

Figure 1. An Illustrative Framework to Elucidate Different Components of XAI[3]

A design framework to address responsible and ethical AI should incorporate the following ingredients:

Responsible AI principles that set the values and boundaries of automated systems

A set of questions and checkpoints to ensure that all responsible AI principles have been considered in the creation process

Tools that help answer some of these questions and help mitigate any problems identified

Training, both technical and non-technical

A governance model that assigns responsibilities and accountabilities

Challenges in Implementing Responsible AI

While the above framework represents a version of what is possible, there are substantial challenges in realizing this vision.

Stay tuned for my next post where I will explain the challenges in implementing Responsible AI!

Footnotes

[1] https://www.pwc.co.uk/audit-assurance/assets/pdf/explainable-artificial-intelligence-xai.pdf

[2] https://www.upturn.org/reports/2018/hiring-algorithms/

[3] This is an illustrative example I created.