AI/ML, Automation and Automated Decision Making

In our popular discourse, AI has been presented as a kind of magic that can transform our lives, ushering in either miraculous improvements in well-being or a dystopian universe in which we are reduced to mindless automatons. We are inundated with daily headlines such as “AI makes giant leap in solving protein structures” and “millions of jobs in danger of being replaced by AI”.

In reality, we have been living through an era of automation and technology-induced productivity change for a long time, and some of the changes ushered in by technology are not easily captured by traditional productivity statistics. Technological innovations can also expand consumer welfare and well-being, and can result in intangible investments such as new business models, businesses and services that would not exist without the technology.

My objective is not to contribute to the discussion around what types of economic or societal changes are fostered by AI, but to contribute to an understanding of how we can harness AI in an equitable, transparent and responsible manner. To illustrate my point, I will provide examples from three different spheres of economic activity.

I want to introduce some terminology. The Encyclopedia Britannica defines artificial intelligence as “the ability of a digital computer or computer-controlled robot to perform tasks commonly associated with intelligent beings. The term is frequently applied to the project of developing systems endowed with the intellectual processes characteristic of humans, such as the ability to reason, discover meaning, generalize, or learn from past experience.” The UK’s Information Commissioner’s Office defines automated decision-making as “the process of making a decision by automated means without any human involvement.” Automated decision making does not necessarily involve the use of AI; it can be accomplished with other technologies, such as decision support systems or call-center and sales-force automation services.

A Brookings report outlines the difference between AI, which “denotes machine learning and machines moving forward on their own, versus auto-decisioning, which is using data within the context of a managed decision algorithm” (https://www.brookings.edu/research/reducing-bias-in-ai-based-financial-services/). IBM defines machine learning as a branch of artificial intelligence (AI) focused on building applications that learn from data and improve their accuracy over time without being explicitly programmed to do so (https://www.ibm.com/cloud/learn/machine-learning). At the risk of overgeneralization, most applications of AI that we hear about in the popular press are really applications of machine learning.

For the purposes of this post, I will refer to automation, automated decision making and AI interchangeably as AI.

Types of Biases: Disparate Impact

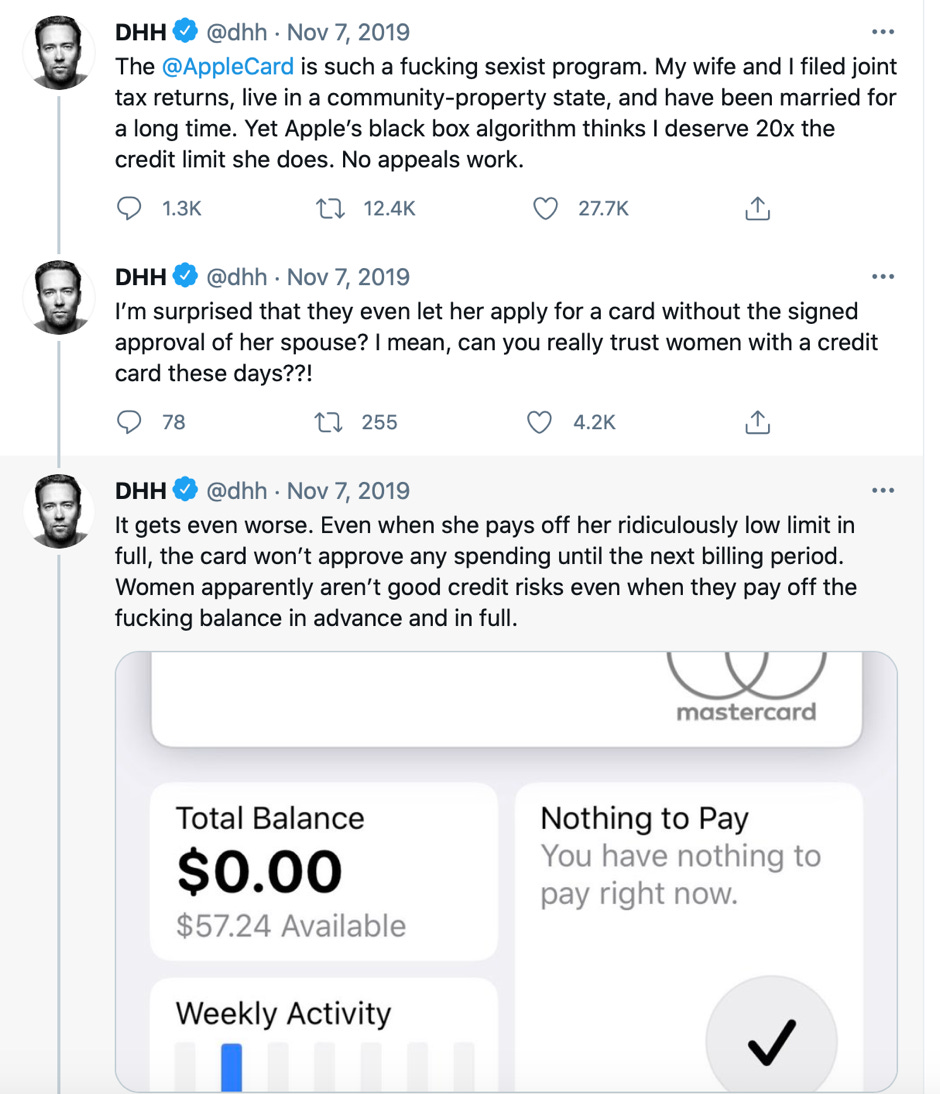

An area of economic activity that has seen widespread adoption of automated decision making is the world of finance, especially consumer-facing activities such as credit cards and consumer loans. In 2019, the New York State Department of Financial Services (NYSDFS) launched an investigation into Goldman Sachs over alleged discriminatory profiling in a credit card it offered jointly with Apple. A tech entrepreneur, David Heinemeier Hansson, posted in a viral tweet that the card had given him a credit limit 20 times higher than his wife’s. This sparked a social media firestorm in which many influential voices, including Apple co-founder Steve Wozniak, questioned why couples residing in community property states, where marital assets are jointly owned, were given such widely varying credit limits.

The NYSDFS issued a statement that state law bans discrimination against protected classes of individuals, “which means an algorithm, as with any other method of determining creditworthiness, cannot result in disparate treatment for individuals based on age, creed, race, color, sex, sexual orientation, national origin or other protected characteristics.”

This is not to place the blame solely on algorithms, since other methods of categorizing potential borrowers, including those that rely on human judgment, can also be flawed. A study from the Federal Reserve Bank of St. Louis found, “Credit score has not acted as a predictor of either true risk of default of subprime mortgage loans or of the subprime mortgage crisis.”[2]

However, the challenge with algorithmic decision making, as succinctly summarized in a 2016 Treasury Department study, is that while “data-driven algorithms may expedite credit assessments and reduce costs, they also carry the risk of disparate impact in credit outcomes and the potential for fair lending violations.”[1]
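A common first-pass way to quantify disparate impact is to compare approval rates across groups. The sketch below computes an adverse impact ratio (each group's approval rate divided by the highest group's rate) and flags groups that fall below 0.8. That “four-fifths” threshold comes from U.S. employment-selection guidelines and is used here only as an illustrative heuristic, not as a lending-law test; the groups and counts are hypothetical.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    return {group: approvals[group] / totals[group] for group in totals}


def adverse_impact_ratios(decisions):
    """Each group's approval rate relative to the most-approved group."""
    rates = approval_rates(decisions)
    best = max(rates.values())
    return {group: rate / best for group, rate in rates.items()}


def flag_disparate_impact(decisions, threshold=0.8):
    """Groups whose ratio falls below the (illustrative) four-fifths threshold."""
    ratios = adverse_impact_ratios(decisions)
    return [group for group, ratio in ratios.items() if ratio < threshold]


# Hypothetical outcomes: 80 of 100 applicants in group "A" approved, 50 of 100 in group "B".
decisions = [("A", i < 80) for i in range(100)] + [("B", i < 50) for i in range(100)]
print(adverse_impact_ratios(decisions))  # B's ratio is 0.5 / 0.8 = 0.625
print(flag_disparate_impact(decisions))  # ['B']
```

A raw rate comparison like this does not account for differences in legitimately relevant credit factors, so it is a screening signal rather than a finding of discrimination.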

Even more concerning is what is termed proxy discrimination: a model that is barred from using a protected trait such as race or sex can still discriminate on that basis through facially neutral variables that are correlated with it, such as ZIP code. Prince and Schwarcz argue that “Unintentional proxy discrimination by AIs is virtually inevitable whenever the law seeks to prohibit discrimination on the basis of traits containing predictive information that cannot be captured more directly within the model by non-suspect data.”
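A toy illustration of the mechanism, using entirely synthetic data: the hypothetical `zip_rule` below never sees group membership, yet because ZIP code is strongly correlated with group, approval rates diverge sharply, and ZIP code alone recovers group membership for most applicants.

```python
from collections import Counter, defaultdict

# Synthetic applicants as (group, zip_code). ZIP code is correlated with group.
applicants = (
    [("A", "10001")] * 90 + [("A", "20002")] * 10
    + [("B", "10001")] * 20 + [("B", "20002")] * 80
)


def zip_rule(zip_code):
    """Hypothetical decision rule that never sees the protected group."""
    return zip_code == "10001"


def approval_rate_by_group(rows):
    totals, approved = Counter(), Counter()
    for group, zip_code in rows:
        totals[group] += 1
        approved[group] += int(zip_rule(zip_code))
    return {group: approved[group] / totals[group] for group in totals}


def proxy_accuracy(rows):
    """Share of rows whose group is recovered by guessing the majority group per ZIP."""
    groups_by_zip = defaultdict(Counter)
    for group, zip_code in rows:
        groups_by_zip[zip_code][group] += 1
    correct = sum(counts.most_common(1)[0][1] for counts in groups_by_zip.values())
    return correct / len(rows)


print(approval_rate_by_group(applicants))  # {'A': 0.9, 'B': 0.2}
print(proxy_accuracy(applicants))          # 0.85
```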

Disparate Impact from Biases in Training Data

One way to think of artificial intelligence methods is that they are built to recognize patterns, or generalize, from samples of “training data”. A child quickly learns to recognize whether an object is a cat. How do we train a computer to do the same? Given a manually labeled dataset, say one in which each image is labeled as a cat or not a cat, machine learning algorithms such as neural networks can learn a representation of the data. Deep learning algorithms, for example, learn underlying features that characterize cats and use them to estimate the probability that a given image shows a cat, iteratively adjusting the model to reduce its errors on the labeled examples. By tuning hyperparameters, the settings that govern how the algorithm learns, we can approximate this aspect of human intelligence.
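The learn-from-labeled-examples loop can be sketched with a perceptron, a far simpler learner than a deep network. The three hand-picked binary features (`has_whiskers`, `pointy_ears`, `barks`) are hypothetical stand-ins for the features a deep network would learn on its own, and `learning_rate` and `epochs` are examples of hyperparameters. The point is that the model only ever reproduces the patterns present in its labels.

```python
def predict(weights, bias, features):
    """Return 1 ("cat") if the weighted sum is positive, else 0."""
    return 1 if sum(w * x for w, x in zip(weights, features)) + bias > 0 else 0


def train_perceptron(examples, learning_rate=0.1, epochs=20):
    """Classic perceptron rule: nudge weights toward each misclassified label."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = label - predict(weights, bias, features)
            if error:
                weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
                bias += learning_rate * error
    return weights, bias


# Hypothetical labeled data: (has_whiskers, pointy_ears, barks) -> 1 if cat, else 0.
examples = [
    ((1, 1, 0), 1),
    ((1, 0, 0), 1),
    ((0, 0, 1), 0),
    ((1, 0, 1), 0),
    ((0, 1, 1), 0),
]
weights, bias = train_perceptron(examples)
print([predict(weights, bias, x) for x, _ in examples])  # [1, 1, 0, 0, 0]
```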

Machine learning methods in general, not only deep learning, apply inductive logic to generalize patterns from training data, and so they inherit whatever patterns those data contain. Amazon developed an AI-based resume screening tool that was found to be biased against women. The tool aimed to automate the screening of resumes for software developer jobs and other technical posts, but historically most resumes for these posts had been submitted by men. Work on the tool began in 2014, and it was trained on resumes submitted to Amazon over the preceding 10-year period. The hiring algorithm learned to penalize resumes that included words such as “women’s”, as in “women’s chess club captain”.
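How a screener can pick up such a penalty is easy to reproduce in miniature. The sketch below is not Amazon's system, whose details were not published; it computes naive-Bayes-style per-token weights (smoothed log-likelihood ratios) from a hypothetical hiring history in which candidates with identical skills were hired less often when their resume mentioned “women's”. Nothing in the code refers to gender, yet that token receives a strongly negative weight.

```python
import math
from collections import Counter


def token_weights(resumes, alpha=1.0):
    """Smoothed log P(token | hired) - log P(token | rejected) for every token.

    resumes: list of (set_of_tokens, hired_bool). Negative weights mark tokens
    that the historical labels associate with rejection.
    """
    hired, rejected = Counter(), Counter()
    n_hired = n_rejected = 0
    for tokens, was_hired in resumes:
        if was_hired:
            hired.update(tokens)
            n_hired += 1
        else:
            rejected.update(tokens)
            n_rejected += 1
    weights = {}
    for token in set(hired) | set(rejected):
        p_hired = (hired[token] + alpha) / (n_hired + 2 * alpha)
        p_rejected = (rejected[token] + alpha) / (n_rejected + 2 * alpha)
        weights[token] = math.log(p_hired / p_rejected)
    return weights


# Hypothetical history: same skills throughout, but skewed past outcomes.
history = (
    [({"python", "captain"}, True)] * 45
    + [({"python", "captain", "women's"}, True)] * 5
    + [({"python", "captain"}, False)] * 20
    + [({"python", "captain", "women's"}, False)] * 20
)
weights = token_weights(history)
print(round(weights["women's"], 2))  # about -1.47: strongly negative
print(round(weights["python"], 2))   # about 0.0: the shared skill carries no signal
```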

Racial disparities and bias-aware AI

A risk-prediction algorithm from UnitedHealth Group lowered the likelihood that sick black patients would receive high-quality care. A study published in Science found that the algorithm was less likely to refer black people than white people who were equally sick to programs meant to improve care for patients with complex medical needs. This is because the algorithm assigned risk scores on the basis of total health-care costs accrued in one year, using cost as a proxy for health need.

Taken in the aggregate, the average black person in the data set had overall health-care costs similar to those of the average white person. However, the average black person was also substantially sicker, with a greater prevalence of conditions such as diabetes, anaemia, kidney failure and high blood pressure [3].

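In other words, health-care costs accrued in a year could significantly understate the true health risks faced by a patient, and substantially more so for black patients, because unequal access to care means that less money is spent on black patients than on white patients with the same level of need. This created a large racial disparity in how care was allocated: the study estimated that remedying it would increase the percentage of black patients receiving additional help from 17.7 to 46.5 percent [3].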
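The mechanism, choosing cost as the target label when the goal is health need, can be illustrated with a hypothetical toy population in which, as an assumption, black patients incur 70% of the cost of equally sick white patients. Referring the top half of patients by cost rather than by number of chronic conditions halves the share of black patients referred. The numbers are invented and are not from the Science study.

```python
# Hypothetical patients: (group, chronic_conditions, annual_cost_usd).
# Assumption for illustration: equal illness, but 30% lower spending on black patients.
patients = [
    ("white", 5, 10_000), ("white", 4, 8_000), ("white", 3, 6_000), ("white", 2, 4_000),
    ("black", 5, 7_000), ("black", 4, 5_600), ("black", 3, 4_200), ("black", 2, 2_800),
]


def refer_top(patients, key, k):
    """Refer the k patients ranked highest by the given risk measure."""
    return sorted(patients, key=key, reverse=True)[:k]


def share_black(referred):
    return sum(1 for group, _, _ in referred if group == "black") / len(referred)


by_cost = refer_top(patients, key=lambda p: p[2], k=4)
by_need = refer_top(patients, key=lambda p: p[1], k=4)
print(share_black(by_cost))  # 0.25
print(share_black(by_need))  # 0.5
```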

A 2016 Bloomberg investigation found that Amazon excluded predominantly black neighborhoods in Boston, Atlanta, Chicago, Dallas, New York City, and Washington, D.C., from its Prime Free Same-Day Delivery service while extending the service to predominantly white neighborhoods. Similar criticisms have been made about bias in facial recognition software (more on that in a future post).

Big Data and Algorithmic Transparency

One of the fundamental realities of the world we live in is that Big Tech companies, as well as finance and banking giants, hold an incredibly detailed and, I would say, intimate portrait of vast swathes of our lives. Shoshana Zuboff and others have termed this phenomenon surveillance capitalism.

We leave digital traces of our movements every time we use Google, shop online or in a store, or even use personal health monitoring apps. A huge ecosystem harnesses all this data, and cutting-edge applications of AI/ML are being developed to predict consumer behavior from detailed personalized profiles, which are then used to microtarget users. Whether in health, education, leisure or banking, businesses mine enormous amounts of data about all of us. As a WSJ article warned us, “Facebook Likes means a computer knows you better than your mother.”

In an interview, Shoshana Zuboff cited the findings of a ProPublica investigation[4]: “breathing machines purchased by people with sleep apnea share usage data to health insurers, where the information is then used to justify reduced insurance payments.”

Privacy and transparency concerns aside, the movement toward AI- and Big Data-powered economic and social transformation continues unabated, with industry leaders cheerleading mantras such as “data is the new oil”. Thought leaders such as Kai-Fu Lee celebrate the brute-force methods of learning inherent in deep learning.

Black Box AI Models

In my opinion, there are three challenges in implementing fair and bias-aware algorithmic decision-making practices. All three stem from the fundamental inscrutability of algorithmic methods deployed over gigantic treasure troves of consumer data.

The first barrier is that we need bias-aware metrics. A ProPublica investigation into algorithms used to predict recidivism, which inform decisions in the criminal justice system such as sentencing, found that the scores predicting the likelihood of individuals committing a future crime were racially biased against black defendants. In particular, the formula was “particularly likely to falsely flag black defendants as future criminals, wrongly labeling them this way at almost twice the rate as white defendants,” while white defendants were mislabeled as low risk more often than black defendants.

A study published in the journal Science Advances analyzed data from the Correctional Offender Management Profiling for Alternative Sanctions (COMPAS) tool, used for criminal risk assessment, to examine whether such algorithms are fundamentally better than untrained humans at predicting recidivism fairly and accurately. It found that COMPAS was no more accurate or fair than predictions made by people with little or no criminal justice expertise, and that while COMPAS employs 137 features about an individual and the individual’s past criminal record, comparable accuracy could be achieved with a simple classifier using only two features: age and number of prior convictions. Studies such as this and the investigations by ProPublica show the need for fairness benchmarks and measures of bias, as well as bias-sensitive recommendations and profiling.
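One such bias measure, the one at the heart of the ProPublica analysis, is error-rate balance: comparing false positive rates (people labeled high risk who did not reoffend) and false negative rates (people labeled low risk who did) across groups. The sketch below computes both from hypothetical counts that mimic the pattern described above; they are not ProPublica's figures.

```python
def error_rates(records):
    """False positive and false negative rates per group.

    records: iterable of (group, predicted_high_risk, reoffended) tuples.
    """
    counts = {}
    for group, predicted, reoffended in records:
        c = counts.setdefault(group, {"fp": 0, "negatives": 0, "fn": 0, "positives": 0})
        if reoffended:
            c["positives"] += 1
            c["fn"] += int(not predicted)
        else:
            c["negatives"] += 1
            c["fp"] += int(predicted)
    return {
        group: {"fpr": c["fp"] / c["negatives"], "fnr": c["fn"] / c["positives"]}
        for group, c in counts.items()
    }


def make_records(group, tp, fp, tn, fn):
    """Expand confusion-matrix counts into individual records."""
    return (
        [(group, True, True)] * tp + [(group, True, False)] * fp
        + [(group, False, False)] * tn + [(group, False, True)] * fn
    )


# Hypothetical counts: same overall accuracy, very different kinds of errors.
records = make_records("group_1", tp=70, fp=40, tn=60, fn=30) + make_records(
    "group_2", tp=50, fp=20, tn=80, fn=50
)
for group, rates in error_rates(records).items():
    print(group, rates)
```

Both hypothetical groups have the same overall accuracy (130 of 200 correct), yet group_1 bears twice the false positive rate (0.4 vs 0.2), which is why accuracy alone is not a sufficient fairness benchmark.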

The second barrier is obfuscation when the workings of an algorithm are a black box. In his book The Black Box Society, Frank Pasquale[5] identifies how norms of trade secrecy, the use of proprietary technologies and administrative safeguards can interfere with transparency. This undermines individuals’ right to understand how companies use their information, and how algorithmic decision making shapes what information they see, what credit offers they get, or even whether they learn of employment opportunities. Algorithmic governance and algorithmic decision making can then mask or muddy discriminatory intent or disparate impact. Companies can invoke trade secret protection to keep proprietary algorithms confidential. As algorithmic regulation and algorithmic governance become pervasive, the movement towards algorithmic transparency could be undermined by legal secrecy and obfuscation in a variety of digitalized transactions in which the decision making of the technology platform or business is opaque to the end user.

Frank Pasquale defines obfuscation as “deliberate attempts at concealment when secrecy has been compromised.” Algorithmic transparency rules might mandate disclosure. Yet, as an example from banking shows, investors would likely “have to process thousands of pages of complicated legal verbiage” in order to comprehend the minutiae of transaction processing rules. Enforcing algorithmic transparency may be even more challenging in the high-tech context, and obfuscation cannot always be addressed through appropriate audits.

The third challenge is that of contextual specificity. In recent years, there have been substantial efforts to make ML models interpretable, so that it becomes easier for someone who has been subject to algorithmic decision making to understand how, say, their creditworthiness was calculated. A Federal Reserve Governor described the lack of transparency thus: “Depending on what algorithms are used, it is possible that no one, including the algorithm’s creators, can easily explain why the model generated the results that it did.”
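For simple models, an explanation can be exact: a linear score decomposes into per-feature contributions relative to a reference applicant, and the most negative contributions can be reported as “reason codes”. The weights, feature names and baseline below are hypothetical. For complex models no such exact decomposition generally exists, which is the difficulty the Governor's remark points to.

```python
# Hypothetical linear credit model: score = BIAS + sum(weight * feature value).
WEIGHTS = {"utilization": -2.0, "late_payments": -1.5, "years_of_history": 0.3}
BIAS = 5.0
BASELINE = {"utilization": 0.3, "late_payments": 0, "years_of_history": 10}


def score(applicant):
    return BIAS + sum(w * applicant[f] for f, w in WEIGHTS.items())


def contributions(applicant):
    """How much each feature moves the score relative to the baseline applicant."""
    return {f: w * (applicant[f] - BASELINE[f]) for f, w in WEIGHTS.items()}


def reason_codes(applicant, top_n=2):
    """Features that lowered the score the most, worst first."""
    negative = sorted((c, f) for f, c in contributions(applicant).items() if c < 0)
    return [f for _, f in negative[:top_n]]


applicant = {"utilization": 0.9, "late_payments": 2, "years_of_history": 4}
print(reason_codes(applicant))  # ['late_payments', 'years_of_history']
# For a linear model the contributions add up exactly to the score gap.
gap = score(applicant) - score(BASELINE)
print(abs(gap - sum(contributions(applicant).values())) < 1e-9)  # True
```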

The encouraging news is that the push for transparency has been aided by the legal system in addition to pressure from consumer rights activists. A Dutch court ruling ordered Ola to be more transparent about the data it uses as the basis for decisions on suspensions and wage penalties for gig workers.

Addressing all these issues requires going well beyond de-biasing training data. Most algorithmic audits only measure whether the system is working as intended, which leaves out the complex socioeconomic conditions and inequities present in society. Addressing those requires a fundamental rethink of how we consider fairness and inequity. Note also that interpretability and fairness are not the same thing; there can be an active tradeoff between making AI methods interpretable and making them fair. Some scholars have proposed a notion of counterfactual fairness, under which a decision is considered fair if it is the same in the actual world as it would be in a counterfactual world where the individual belonged to a different demographic group [6]. Any bias-aware method also needs to be able to implement fairness and explainability methods at scale.
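Counterfactual fairness can only be made concrete relative to a causal model. The sketch below assumes a hypothetical one-line structural model in which an observed feature X equals a latent background factor U plus a group effect `BETA * A`. Following the abduction idea in Kusner et al., it recovers U from the observed data, sets A to the other group, recomputes X, and checks whether the decision changes. In this deterministic toy model, a rule thresholding X fails the check while a rule thresholding the recovered U passes. In practice, specifying the causal model is the hard and contestable part.

```python
BETA = -1.0      # hypothetical effect of group membership A (0 or 1) on feature X
THRESHOLD = 0.0  # hypothetical approval threshold


def abduct_u(x, a):
    """Recover the latent factor U from the structural equation X = U + BETA * A."""
    return x - BETA * a


def counterfactual_x(x, a, a_new):
    """Value X would have taken for the same individual (same U) had A been a_new."""
    return abduct_u(x, a) + BETA * a_new


def rule_on_x(x, a):
    return x >= THRESHOLD


def rule_on_u(x, a):
    return abduct_u(x, a) >= THRESHOLD


def is_counterfactually_fair(rule, x, a):
    """Same decision in the actual world and in the world where A is flipped?"""
    a_new = 1 - a
    return rule(x, a) == rule(counterfactual_x(x, a, a_new), a_new)


# Individual in group A = 1 with observed X = -0.5 (so U = 0.5).
print(is_counterfactually_fair(rule_on_x, x=-0.5, a=1))  # False
print(is_counterfactually_fair(rule_on_u, x=-0.5, a=1))  # True
```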

What makes AI systems attractive to businesses is that they offer the benefits of large-scale automation: Netflix producing hit shows, Uber matching us with the nearest drivers, Amazon’s proprietary algorithms optimizing everything from routing to picking in warehouses. In the process, we as consumers have enjoyed greater convenience and product variety. However, as a society we have to collectively decide to what extent we are comfortable sacrificing our right to an explanation and our right to be treated equitably and fairly in return for convenience and personalization.

Endnotes

[1] See also David Skanderson and Dubravka Ritter, “Fair Lending Analysis of Credit Cards,” Payment Cards Center, Federal Reserve Bank of Philadelphia, August 2014, available at http://www.philadelphiafed.org/consumer-credit-and-payments/payment-cards-center/publications/discussion-papers/2014/D-2014-Fair-Lending.pdf; Robert Avery, et al., “Does Credit Scoring Produce a Disparate Impact?” Staff Working Paper, Finance and Economics Discussion Series, Divisions of Research & Statistics and Monetary Affairs, Federal Reserve Board, October 2010, available at https://www.federalreserve.gov/pubs/feds/2010/201058/201058pap.pdf.

[2] https://www.americanbanker.com/news/can-ai-be-programmed-to-make-fair-lending-decisions

[3] Obermeyer, Z., Powers, B., Vogeli, C. & Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science 366, 447–453 (2019).

[4] https://www.propublica.org/article/you-snooze-you-lose-insurers-make-the-old-adage-literally-true

[5] Frank Pasquale, The Black Box Society (Harvard University Press, 2015). See also Danielle Citron and Frank Pasquale, The Scored Society, Wash. L. Rev. (2014)

[6] Kusner, M. J., Loftus, J. R., Russell, C., & Silva, R. (2017, March). Counterfactual Fairness. arXiv e-prints, arXiv:1703.06856.